NCBI's GEO database of gene expression data is a great resource, but its records are very open ended. This lack of rigidity was perhaps necessary to accommodate the variety of measurement technologies, but makes getting data out a little tricky. But, all that flexibility is a curse from the point of view of extracting data. The scripts I end up with are not general parsers for GEO data, but will need to be adapted to the specifics of other datasets.

Note: It could be that I'm doing things the hard way. Maybe there's an easier way.

A GEO record consists of a platform, which describes (for example) a microarray and its probes, and series of samples. In this example, we need to do a join between the platform and the sample records to end up with a matrix of the form (seq, strand, start, end, value1, value2, ..., valueN) where the value1 column holds measurements from the first sample and so on. If we do that, we'll have coordinates on the genome and values for each measurement. My goal is to feed data into a genome browser known as HeebieGB with a stop-over in R along the way.

Merging on a common key is only slightly complicated, but tiling arrays are big (~244,000 probes in this case). I hesitate to try merging several 244K row tables in memory. Database engines are made for this sort of thing, so I'll use SQLite to get this done and Python to script the whole process.

I like to start python scripts with a template similar to Guido's main function, except that I prefer optparse to getopt. An --overwrite option will force the user to be conscious of overwriting files.

import sys

from optparse import OptionParser

def main():

usage = "%prog [options] input_file sqlite_db_file"

parser = OptionParser(usage=usage)

parser.add_option("-o", "--overwrite", dest="overwrite", default=False, action="store_true",

help="if output db file exists, overwrite it")

(options, args) = parser.parse_args()

if len(args) < 2:

parser.error("missing required arguments.")

exit(2)

input_filename = args[0]

db_filename = args[1]

if __name__ == "__main__":

sys.exit(main())

GEO records a bunch of descriptive data about each sample, some of which we want. I've read that storing arbitrary key-value pairs in a relational DB is considered bad by some. But, I'm going to do it anyway. The entity attributes will go in a table called attributes whose schema is (entity_id, key, value).

The function parse_platform_table pulls the platform data from a tab-separated section in the SOFT file into a table with a schema something like this: (id, sequence, strand, start, end). There's also a tab-separated section for each of the samples that refers back to its platform, so I extract that in a similar manner in parse_sample_table. It's easiest to start out with each sample in its own table, even though that's not really what we want.

The complete script -also available from SVN here- ends up like this:

import sys

from optparse import OptionParser

import re

import os

import os.path

import sqlite3

# GEO SOFT format is documented here:

# http://www.ncbi.nlm.nih.gov/projects/geo/info/soft2.html#SOFTformat

# ID field in platform joins with ID_REF field in samples

entity = re.compile(r'\^(\S+) = (.+)')

kvp = re.compile(r'!(\S+) = (.+)')

STATE_START = 0

STATE_IN_SERIES = 1001

STATE_IN_PLATFORM = 1002

STATE_IN_SAMPLE = 1003

def overwrite(name):

if os.path.exists(name):

os.remove(name)

return True

return False

def parse_series_file(file, conn):

entity_id = None

state = STATE_START

# create an attributes table

try:

cursor = conn.cursor()

cursor.execute('create table attributes (entity_id, key, value);')

conn.commit()

cursor.close()

finally:

cursor.close()

for line in file:

line = line.strip()

# read entity tags

if line.startswith('^'):

m = entity.match(line)

if m:

entity_type = m.group(1)

entity_id = m.group(2)

print(entity_id)

if entity_type == 'SERIES':

state = STATE_IN_SERIES

elif entity_type == 'PLATFORM':

state = STATE_IN_PLATFORM

elif entity_type == 'SAMPLE':

state = STATE_IN_SAMPLE

# read attribute key-value pairs and tab-separated tables

elif line.startswith('!'):

m = kvp.match(line)

if m:

key = m.group(1)

value = m.group(2)

handle_attribute(conn, entity_id, key, value)

elif state==STATE_IN_PLATFORM and line=='!platform_table_begin':

parse_platform_table(file, conn, entity_id)

elif state==STATE_IN_SAMPLE and line=='!sample_table_begin':

parse_sample_table(file, conn, entity_id)

def parse_platform_table(file, conn, platform_id):

"""

Read the tab-separated platform section of a SOFT file and store the ID,

sequence, strand, start, and end columns in a SQLite database.

file: a file object open for reading

conn: a SQLite database connection

platform_id: a string identifying a GEO platform

"""

cursor = conn.cursor()

try:

# throw away line containing column headers

file.next()

# create platform table

cursor.execute('create table %s (id integer primary key not null, sequence text not null, strand not null, start integer not null, end integer not null, control_type integer);' % (platform_id))

conn.commit()

sql = 'insert into %s values(?,?,?,?,?,?)' % (platform_id)

for line in file:

line = line.strip('\n')

if (line.strip() == '!platform_table_end'):

break

fields = line.split("\t")

cursor.execute(sql, (int(fields[0]), fields[6], fields[10], fields[7], fields[8], fields[4]))

conn.commit()

finally:

cursor.close()

def parse_sample_table(file, conn, sample_id):

"""

Read a tab separated sample section from a SOFT file and store ID_REF and

value in a SQLite DB.

file: a file object open for reading

conn: a SQLite database connection

sample_id: a string identifying a GEO sample

"""

cursor = conn.cursor()

try:

# throw away line containing column headers

file.next()

# create sample table

cursor.execute('create table %s (id_ref integer not null, value numeric not null);' % (sample_id))

conn.commit()

sql = 'insert into %s values(?,?)' % (sample_id)

for line in file:

line = line.strip('\n')

if (line.strip() == '!sample_table_end'):

break

fields = line.split("\t")

cursor.execute(sql, (int(fields[0]), float(fields[1])))

conn.commit()

finally:

cursor.close()

def handle_attribute(conn, entity_id, key, value):

"""

Store an entity attribute in the attributes table

"""

cursor = None

try:

cursor = conn.cursor()

cursor.execute("insert into attributes values(?,?,?);", (entity_id, key, value))

conn.commit()

finally:

if cursor:

cursor.close()

def main():

usage = "%prog [options] input_file"

parser = OptionParser(usage=usage)

parser.add_option("-o", "--overwrite", dest="overwrite", default=False, action="store_true",

help="if output db file exists, overwrite it")

(options, args) = parser.parse_args()

if len(args) < 2:

parser.error("missing required arguments.")

exit(2)

input_filename = args[0]

db_filename = args[1]

if options.overwrite:

overwrite(db_filename)

input_file = None

conn = None

try:

conn = sqlite3.connect(db_filename)

input_file = open(input_filename, 'r')

parse_series_file(input_file, conn)

finally:

if input_file:

input_file.close()

if conn:

conn.close()

if __name__ == "__main__":

sys.exit(main())

The specific series I'm interested in (GSE12923) has 53 samples. The platform (GPL7255) is a custom array on Agilent's 244k feature microarrays or just short of 13 million individual features. The SOFT file is 708 MB and the script takes a good 5 or 6 minutes to ingest all that data. The next step is merging all the data into a single matrix.

This turned out to be harder than I thought. At first, I naively tried to do a big 54 way join between the platform table and all the sample tables, with an order-by to sort by chromosomal location. I let this run for a couple hours, then gave up. Sure, a big join on unindexed tables was bound to be ugly, but it only had to run once. I'm still surprised that this choked, after all, it's not that much data.

There are two ways around it. One is to index the sample tables by ID_REF and the platform table by (sequence, strand, start, end). The other is to do the big join then sort into a second table. Either takes several minutes, but it's just a one-off, so that's OK.

insert into matrix

select GPL7255.sequence, GPL7255.strand, GPL7255.start, GPL7255.end,

GSM320660.VALUE as GSM320660,

GSM320661.VALUE as GSM320661,

...GSM320712.VALUE as GSM320712

from GPL7255

join GSM320660 on GPL7255.ID = GSM320660.ID_REF

join GSM320661 on GPL7255.ID = GSM320661.ID_REF

...join GSM320712 on GPL7255.ID = GSM320712.ID_REF

where GPL7255.control_type==0 and sequence!='NA';

order by sequence, strand, start, end;

Now that we've done that, do you ever find data that doesn't need to be cleaned up a little bit?

-- swap mislabeled + and - strands (how embarrassing!)

update matrix set strand='Z' where strand='-';

update matrix set strand='-' where strand='+';

update matrix set strand='+' where strand='Z';

-- fix up sequence names

update matrix set sequence='chromosome' where sequence='chr1';

update matrix set sequence='pNRC200' where sequence='chr2';

update matrix set sequence='pNRC100' where sequence='chr3';

-- fix probes crossing the "zero" point

update matrix set start=end, end=start where end-start > 60;

That's about all the data munging I can stand for now. The rest, I'll leave for Part 2.

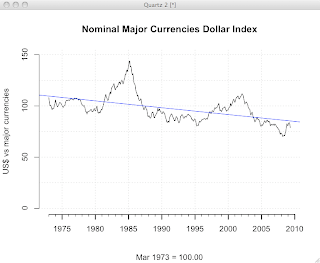

R Bloggers

R Bloggers